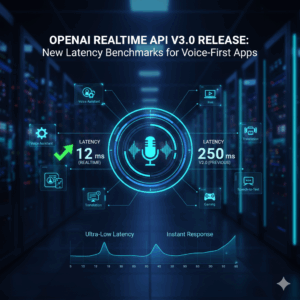

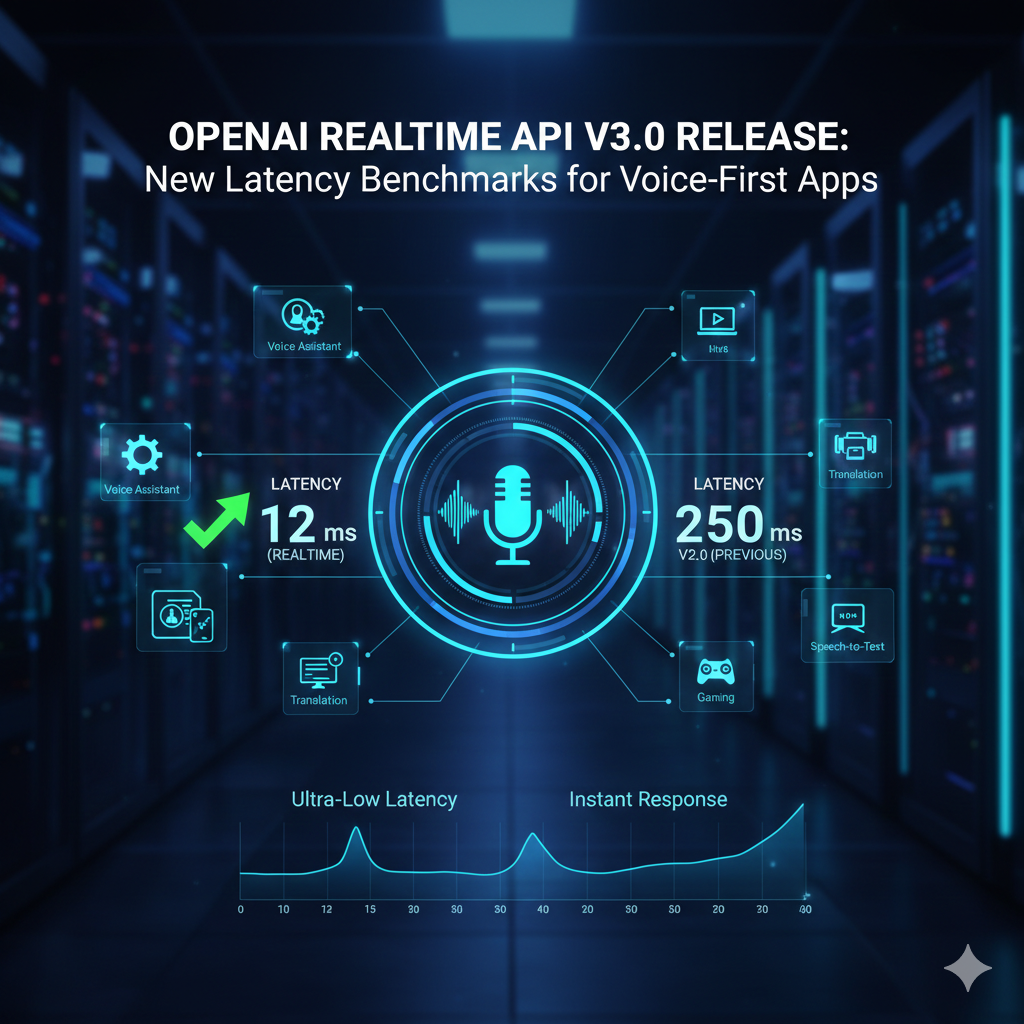

OpenAI Realtime API v3.0 Release: New Latency Benchmarks for Voice-First Apps

Version 3.0 of the OpenAI Realtime API changes how developers approach voice-first applications, particularly in speed, responsiveness, and real-time interactivity. Voice systems depend on low latency: even small delays interrupt the natural flow of speech and erode users’ trust in the system. The release aims to shorten the time between the user’s speech and the system’s response, so that AI-driven voice interactions feel more personal and less mechanical. As more devices adopt conversational interfaces, latency benchmarks are becoming as important as model accuracy. The ability to take in, understand, and respond to voice input almost instantly is no longer a luxury feature but a basic requirement. With this release, the Realtime API is positioned as a foundational component for future speech ecosystems. It also signals that the industry as a whole is moving toward real-time AI infrastructure that supports continuous engagement rather than batch processing.

Voice-First Application Architecture: An Evolutionary Perspective

Voice-first apps have progressed from simple command-based systems to complex conversational platforms that demand real-time reasoning and contextual understanding. Earlier voice systems relied on sequential pipelines: speech was transcribed, then analyzed, then answered, and each stage added a noticeable delay. Realtime API version 3.0 supports a move toward continuous streaming, in which audio is processed as it arrives. The system can therefore begin producing a reply before the user has finished speaking, which makes responses feel considerably faster. These architectural changes make voice interactions more conversational and less transactional. Developers can build systems that behave like participants in a conversation rather than rigid tools. This enables use cases such as live assistants, real-time coaching, and interactive customer support.
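The difference between the two designs can be sketched with simple arithmetic. The model below is illustrative only: the `StageCosts` fields, the chunk count, and all millisecond figures are hypothetical values chosen for the example, not measurements of the Realtime API. It assumes that in the streaming case each chunk is transcribed while the user is still speaking, so only the final chunk remains to be processed once speech ends.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StageCosts:
    """Hypothetical per-stage costs, in milliseconds."""
    transcribe_ms_per_chunk: float  # time to transcribe one audio chunk
    reason_ms: float                # time until the first response token
    synth_ms: float                 # time until the first synthesized audio


def sequential_delay(n_chunks: int, costs: StageCosts) -> float:
    """Delay after speech ends when every stage waits for the previous one.

    All chunks are transcribed only after the user stops speaking.
    """
    return n_chunks * costs.transcribe_ms_per_chunk + costs.reason_ms + costs.synth_ms


def streaming_delay(costs: StageCosts) -> float:
    """Delay after speech ends when chunks are transcribed as they arrive.

    Assumes transcription keeps pace with the speaker, so only the last
    chunk is still pending when the user stops.
    """
    return costs.transcribe_ms_per_chunk + costs.reason_ms + costs.synth_ms


if __name__ == "__main__":
    costs = StageCosts(transcribe_ms_per_chunk=30.0, reason_ms=200.0, synth_ms=100.0)
    print("sequential:", sequential_delay(20, costs), "ms")
    print("streaming: ", streaming_delay(costs), "ms")
```

In this toy model the sequential delay grows with the length of the utterance, while the streaming delay does not.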

The Importance of Latency in Conversational AI Systems

Latency is one of the most important performance measures in voice-based AI because it directly affects user experience and engagement. In human conversation, response times are typically measured in fractions of a second, and any noticeable delay feels odd to the listener. If an AI system takes too long to respond, users may conclude that it is malfunctioning or unreliable. Reducing this latency is the primary focus of Realtime API version 3.0, which aims to make conversational loops smoother. Lower latency makes turn-taking easier, reduces interruptions, and makes the conversation feel more natural. This matters most in applications such as contact centers, virtual assistants, and real-time translation, where the rhythm of the exchange is critical. As user expectations continue to rise, minimizing latency is becoming a competitive advantage rather than just a technical improvement.
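One way to make latency concrete is to record the delay for each conversational turn and report percentiles rather than a single average, because an average can hide occasional slow turns. The sketch below uses the nearest-rank method. The function name and the sample values are invented for illustration and are not benchmark results.

```python
import math
from typing import Sequence


def percentile(samples_ms: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile of a set of latency samples.

    Returns the smallest sample such that at least pct percent of all
    samples are less than or equal to it.
    """
    if not samples_ms:
        raise ValueError("need at least one sample")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in the range 1..100")
    ordered = sorted(samples_ms)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


if __name__ == "__main__":
    # Hypothetical per-turn response delays in milliseconds.
    turn_latencies_ms = [180, 210, 190, 950, 205, 200, 220, 195, 185, 215]
    print("median:", percentile(turn_latencies_ms, 50))
    print("p95:   ", percentile(turn_latencies_ms, 95))
```

With these made-up samples, the single slow turn barely moves the median but dominates the 95th percentile, which is why tail latency is usually tracked separately.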

Continuous Processing and Real-Time Streaming

One feature that distinguishes Realtime API version 3.0 from its predecessor is its support for real-time streaming and continuous audio processing. The system processes speech in small chunks as it arrives instead of waiting for the entire audio input. The model can therefore begin reasoning and generating a reply immediately rather than waiting for the full sentence to be completed. Continuous processing greatly reduces end-to-end latency and makes the experience more intuitive and interactive. It also enables capabilities such as interruption handling, which lets users talk over the AI while the conversation stays coherent. This kind of streaming architecture is essential for building fully conversational systems, and it brings speech AI closer to the way people actually communicate.
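A minimal sketch of the two ideas in this section, chunked processing and interruption handling, is shown below. It does not call the Realtime API: `chunk_audio`, `play_with_barge_in`, and the `user_is_speaking` callback are hypothetical names, and a real application would likely rely on voice activity detection instead of the stub used here.

```python
from typing import Callable, Iterable, Iterator, List


def chunk_audio(pcm: bytes, chunk_bytes: int) -> Iterator[bytes]:
    """Yield fixed-size slices of an audio buffer; the last one may be shorter."""
    if chunk_bytes <= 0:
        raise ValueError("chunk_bytes must be positive")
    for start in range(0, len(pcm), chunk_bytes):
        yield pcm[start:start + chunk_bytes]


def play_with_barge_in(
    response_chunks: Iterable[bytes],
    user_is_speaking: Callable[[int], bool],
) -> List[bytes]:
    """Play response chunks in order, stopping as soon as the user talks.

    user_is_speaking(i) is checked before chunk i is played. The chunks
    that were actually played are returned so the caller knows how much
    of the reply the user heard.
    """
    played: List[bytes] = []
    for index, chunk in enumerate(response_chunks):
        if user_is_speaking(index):
            break  # barge-in: drop the rest of the reply
        played.append(chunk)
    return played


if __name__ == "__main__":
    incoming = list(chunk_audio(b"abcdefgh", 3))
    print(incoming)
    # Stub detector: pretend the user starts talking before the third chunk.
    heard = play_with_barge_in([b"one", b"two", b"three"], lambda i: i >= 2)
    print(heard)
```

Returning the chunks that were played, rather than simply stopping, is one possible design choice: it lets the conversation state record what the user actually heard before interrupting.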

Impact on User Interaction and Experience

Real-time processing and lower latency directly improve how users perceive and interact with voice-based apps. When replies arrive promptly, users get the impression that the system understands their questions and is actively taking part in the conversation. This increases trust, satisfaction, and overall engagement with the product. By contrast, voice systems that reply slowly often feel unnatural and frustrating, even when their answers are technically correct. As response cycles get faster, users are more inclined to keep using the platform and to explore its more advanced features. Applications such as learning platforms, therapy bots, and interactive storytelling are especially well placed to benefit. In highly competitive digital markets, the ability to hold a smoothly flowing conversation becomes a key differentiator.

Advantages for Product Teams and Developers

Realtime API version 3.0 makes it easier for developers to build high-performance speech apps. Much of the performance management is built into the design of the system, so teams do not have to implement sophisticated latency optimizations by hand. As a result, teams can concentrate on product logic, conversation design, and user experience rather than on low-level infrastructure. Faster response times also reduce the need for workarounds such as filler sounds or artificial delays. Product teams can experiment with dynamic interactions such as adaptive conversation flows and real-time decision making. This flexibility shortens development cycles and supports more new product concepts. Ultimately, the API lowers the technical barriers to building sophisticated speech systems.

Multimodal Integration and Future Interaction Models

Realtime API version 3.0 also accommodates the growing trend toward multimodal interaction, which combines speech with text, graphics, and contextual data. Users can talk while referring to visual information or receiving visual feedback, which allows for richer experiences. For example, a voice assistant might describe a picture, guide a user through a visual interface, or answer spoken questions about content shown on a screen. Multimodal systems create interactions that are more immersive and intuitive than voice alone can provide. Combining multiple data streams also gives the AI a deeper understanding of context. This integration points to the future of human-AI interaction, in which communication becomes more natural and versatile across a variety of forms.

Challenges in Real-Time Voice System Design

Despite these advances, building real-time speech systems still presents technical and architectural challenges. Network conditions, device limitations, and background noise can all affect system performance. To give users a consistent experience, developers need to manage audio quality, speech recognition, and response time carefully. Because interruptions and misunderstandings are more likely in live interactions, real-time systems also need robust error handling. Balancing speed and accuracy remains a significant difficulty, particularly in complex conversational contexts. Scalability is a further concern, because real-time systems require more processing resources than conventional text-based models. Overcoming these problems requires deliberate system design and continuous performance monitoring.
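As one illustration of error handling under unstable network conditions, a client might retry a dropped connection with capped exponential backoff. This is a generic technique rather than anything specified for the Realtime API, and the function name and delay values below are assumptions made for the example.

```python
from typing import List


def backoff_delays(base_ms: int, cap_ms: int, attempts: int) -> List[int]:
    """Return the wait before each retry, in milliseconds.

    The wait doubles after every failed attempt (base, 2x base, 4x base,
    and so on) but never exceeds cap_ms, so a long outage does not push
    the delay up without bound.
    """
    if base_ms <= 0 or cap_ms <= 0 or attempts < 0:
        raise ValueError("base_ms and cap_ms must be positive, attempts non-negative")
    return [min(cap_ms, base_ms * 2 ** attempt) for attempt in range(attempts)]


if __name__ == "__main__":
    # Hypothetical settings: start at 100 ms, never wait longer than 1 second.
    print(backoff_delays(base_ms=100, cap_ms=1000, attempts=5))
```

In practice a small random jitter is often added to each delay so that many clients do not reconnect at the same moment; that step is omitted here to keep the sketch deterministic.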

Strategic Implications for the Artificial Intelligence Industry

The introduction of Realtime API version 3.0 reflects a broader movement in the AI sector toward real-time, interactive intelligence. AI systems are evolving from static tools that only react to specific instructions into agents that engage in continuous interaction. This has strategic implications for industries including customer service, education, healthcare, and entertainment. Real-time speech technology lets businesses provide services that are more customized and responsive. It also raises the expectation that future AI products will offer immediate interaction by default. As real-time capabilities advance, AI will increasingly act as a digital presence rather than a utility running in the background. This marks a shift from passive AI tools toward active conversational systems.

The Future of Voice-First Applications

Looking ahead, voice-first apps will continue to develop as latency keeps falling and interaction models grow more complex. Future systems may be able to anticipate the user’s intent before a sentence is finished and adjust replies in real time. Voice interfaces are expected to incorporate emotional signals, tone analysis, and contextual awareness in order to produce communication that is closer to human speech. If real-time AI becomes more reliable, speech may replace many conventional input methods, such as typing and clicking. This transition would reshape how people engage with digital products across platforms. Realtime API version 3.0 serves as an early foundation for this change, and it points to a future in which speech is the principal means of communication between people and intelligent systems.